3D-RCNN

Instance-level 3D Object Reconstruction via Render-and-Compare

Abstract

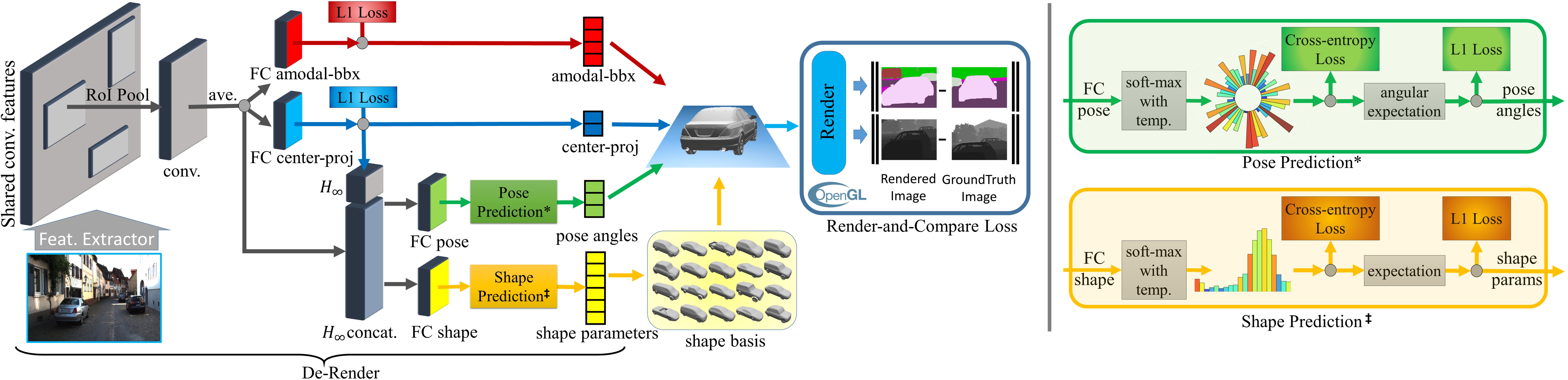

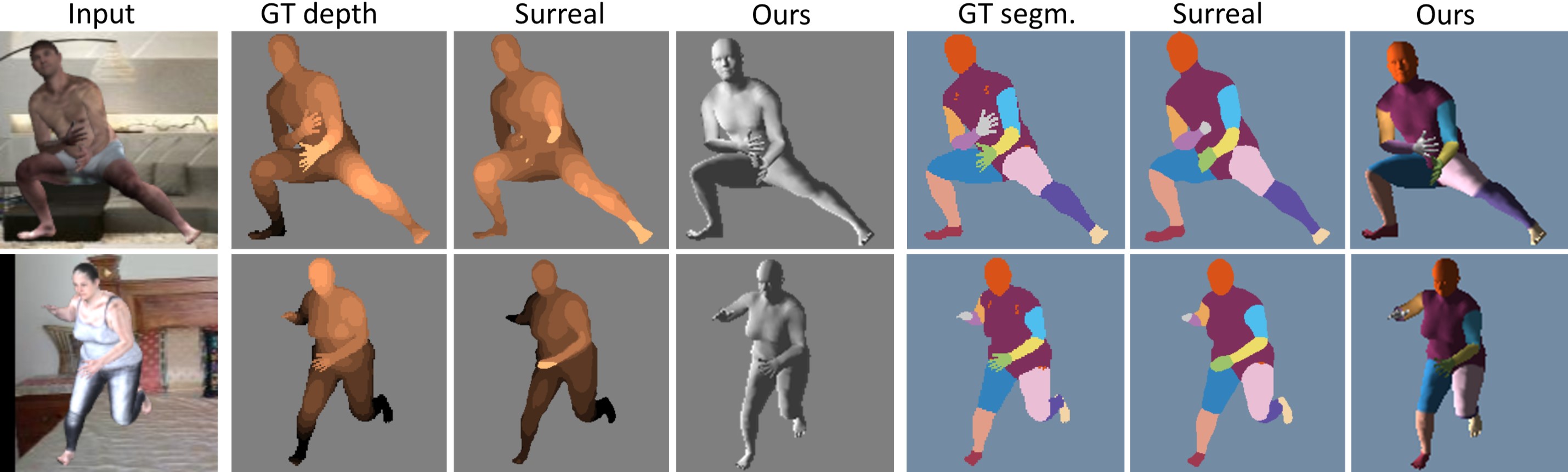

We present a fast inverse-graphics framework for instance-level 3D scene understanding. We train a deep convolutional network that learns to map image regions to the full 3D shape and pose of all object instances in the image. Our method produces a compact 3D representation of the scene, which can be readily used for applications like autonomous driving. Many traditional 2D vision outputs, like instance segmentations and depth maps, can be obtained by simply rendering our output 3D scene model. We exploit class-specific shape priors by learning a low-dimensional shape space from collections of CAD models. We present novel representations of shape and pose that strive towards better 3D equivariance and generalization. To exploit rich supervisory signals in the form of 2D annotations like segmentation, we propose a differentiable Render-and-Compare loss that allows 3D shape and pose to be learned with 2D supervision. We evaluate our method on the challenging real-world datasets of Pascal3D+ and KITTI, where we achieve state-of-the-art results.
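The Render-and-Compare idea can be illustrated with a toy sketch. This is not the renderer, loss, or optimizer used in the paper. It only shows the general pattern of rendering a parametric model to a 2D mask, comparing that mask with a 2D annotation, and adjusting the parameters to reduce the mismatch. Everything here is an assumption made for illustration: the "scene" is a single 2D disk with parameters `(cx, cy, r)`, the loss is one minus a soft IoU, gradients are approximated by central finite differences, and all function names are hypothetical.

```python
import math


def disk_mask(cx, cy, r, size=16):
    """Stand-in for a 2D segmentation annotation: a hard binary disk mask."""
    return [[1.0 if math.hypot(x - cx, y - cy) <= r else 0.0
             for x in range(size)] for y in range(size)]


def render_disk(cx, cy, r, size=16, sharpness=2.0):
    """Toy 'renderer': a soft-edged disk mask with values in (0, 1)."""
    return [[1.0 / (1.0 + math.exp(-sharpness * (r - math.hypot(x - cx, y - cy))))
             for x in range(size)] for y in range(size)]


def soft_iou_loss(rendered, target):
    """Compare step: 1 - soft IoU between two masks of equal size."""
    inter = sum(a * b for ra, rb in zip(rendered, target) for a, b in zip(ra, rb))
    total = sum(a + b for ra, rb in zip(rendered, target) for a, b in zip(ra, rb))
    return 1.0 - inter / (total - inter)


def loss_at(params, target):
    """Render the current parameters and compare against the 2D target."""
    return soft_iou_loss(render_disk(*params), target)


def fit(target, params, steps=300, lr=1.0, eps=1e-3):
    """Gradient descent using central finite differences through the renderer."""
    params = list(params)
    for _ in range(steps):
        grad = []
        for i in range(len(params)):
            hi = params[:]
            lo = params[:]
            hi[i] += eps
            lo[i] -= eps
            grad.append((loss_at(hi, target) - loss_at(lo, target)) / (2 * eps))
        params = [p - lr * g for p, g in zip(params, grad)]
    return params


if __name__ == "__main__":
    target = disk_mask(9.0, 7.0, 4.0)   # pretend 2D annotation
    start = [7.0, 8.0, 3.0]             # initial guess for (cx, cy, r)
    fitted = fit(target, start)
    print("loss before:", loss_at(start, target))
    print("loss after: ", loss_at(fitted, target))
    print("fitted (cx, cy, r):", fitted)
```

In this toy setting only 2D supervision (the mask) is used to recover the model parameters, which is the aspect of the abstract the sketch is meant to convey. The soft edge in `render_disk` is what keeps the loss smooth enough for small parameter changes to produce a usable gradient signal.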

Method

Results

Downloads

Citation

For attribution in academic contexts, please cite this work as

@inproceedings{3DRCNN_CVPR2018,
  author = {Kundu, Abhijit and Li, Yin and Rehg, James M.},
  title = {3D-RCNN: Instance-level 3D Object Reconstruction via Render-and-Compare},
  booktitle = {CVPR},
  year = {2018},
  doi = {10.1109/CVPR.2018.00375},
  url = {https://doi.org/10.1109/CVPR.2018.00375},
}