Panoptic Neural Fields

A Semantic Object-Aware Neural Scene Representation

Video

Abstract

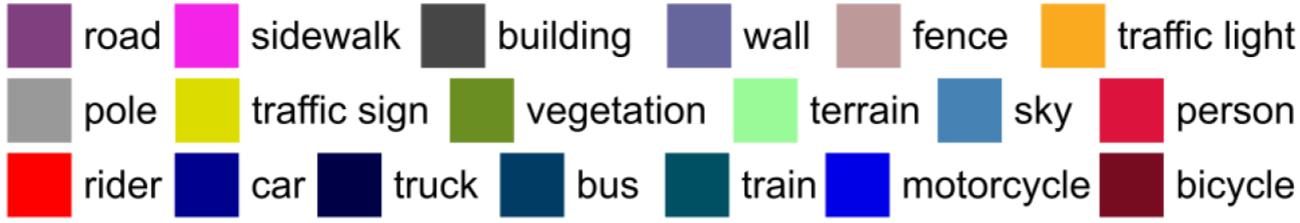

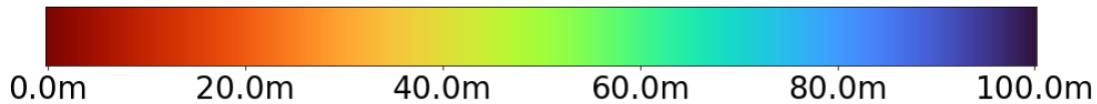

We present Panoptic Neural Fields (PNF), an object-aware neural scene representation that decomposes a scene into a set of objects (things) and background (stuff). Each object is represented by an oriented 3D bounding box and a multi-layer perceptron (MLP) that takes position, direction, and time and outputs density and radiance. The background stuff is represented by a similar MLP that additionally outputs semantic labels. The object MLPs are instance-specific and thus can be smaller and faster than those of previous object-aware approaches, while still leveraging category-specific priors incorporated via meta-learned initialization. Our model builds a panoptic radiance field representation of any scene from just color images. We use off-the-shelf algorithms to predict camera poses, object tracks, and 2D image semantic segmentations. Then we jointly optimize the MLP weights and bounding box parameters using analysis-by-synthesis with self-supervision from color images and pseudo-supervision from predicted semantic segmentations. In experiments on real-world dynamic scenes, we find that our model can be used effectively for several tasks, such as novel view synthesis, 2D panoptic segmentation, 3D scene editing, and multiview depth prediction.
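To make the per-object representation concrete, here is a minimal NumPy sketch of querying one object: points are mapped into the frame of an oriented box, and a small MLP takes position, direction, and time and returns density and radiance. This is an illustration under stated assumptions, not the authors' implementation. The function names, the yaw-only box rotation, the layer sizes, the random (rather than meta-learned) initialization, and the choice to zero the density outside the box are all hypothetical.

```python
import numpy as np

def yaw_rotation(yaw):
    """3x3 rotation about the z axis (assumption: boxes rotate only about 'up')."""
    c, s = np.cos(yaw), np.sin(yaw)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

def world_to_box(points, center, yaw):
    """Map world-space points (N, 3) into the box's local frame."""
    # Row-vector form of R^T (p - c).
    return (points - center) @ yaw_rotation(yaw)

def inside_box(local_points, size):
    """True where a local point lies within the box extents (length, width, height)."""
    return np.all(np.abs(local_points) <= 0.5 * np.asarray(size), axis=-1)

def init_mlp(rng, in_dim=7, hidden=16, out_dim=4):
    """Randomly initialized two-layer MLP (a stand-in for a meta-learned init)."""
    return {
        "w1": rng.normal(0.0, 0.5, (in_dim, hidden)), "b1": np.zeros(hidden),
        "w2": rng.normal(0.0, 0.5, (hidden, out_dim)), "b2": np.zeros(out_dim),
    }

def query_object(params, points, dirs, t, center, yaw, size):
    """Return (density, rgb) for world-space points and view directions at time t."""
    local = world_to_box(points, center, yaw)
    local_dirs = dirs @ yaw_rotation(yaw)          # rotate directions, no translation
    time_col = np.full((len(points), 1), float(t))
    x = np.concatenate([local, local_dirs, time_col], axis=-1)  # (N, 7)
    h = np.maximum(x @ params["w1"] + params["b1"], 0.0)        # ReLU
    out = h @ params["w2"] + params["b2"]
    density = np.log1p(np.exp(out[:, 0]))           # softplus keeps density positive
    rgb = 1.0 / (1.0 + np.exp(-out[:, 1:]))         # sigmoid keeps colors in (0, 1)
    # Assumption: the object contributes nothing outside its bounding box.
    density = np.where(inside_box(local, size), density, 0.0)
    return density, rgb

# Usage: one point inside a rotated box, one far outside it.
rng = np.random.default_rng(0)
params = init_mlp(rng)
points = np.array([[1.0, 1.5, 0.0], [10.0, 0.0, 0.0]])
dirs = np.array([[0.0, 0.0, 1.0], [0.0, 0.0, 1.0]])
density, rgb = query_object(params, points, dirs, t=0.0,
                            center=np.array([1.0, 0.0, 0.0]),
                            yaw=np.pi / 2, size=(4.0, 2.0, 2.0))
```

Because the MLP only ever sees box-local coordinates, the box pose and the MLP weights are separate parameters, which is what allows them to be optimized jointly.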

Applications

Panoptic Neural Fields can be used for several applications shown below.

Results

We evaluate our method on KITTI

Results on (dynamic) KITTI scenes

Novel view synthesis results on KITTI-360

Scene editing

Because the proposed panoptic neural field representation is object-aware, objects in the scene can be manipulated and edited simply by changing an object's pose or its MLP parameters. We show some examples of scene editing below.
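The pose-editing idea can be sketched in a few lines: if an object's field is defined in its own box frame, moving the object only requires changing the box pose, and the field content follows. The snippet below is a hedged illustration, not the authors' code. `ObjectPose`, `move_object`, the yaw-only rotation, and the Gaussian blob used in place of a trained object MLP are all hypothetical.

```python
import numpy as np
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class ObjectPose:
    center: np.ndarray   # box center in world coordinates
    yaw: float           # rotation about the z axis (assumption: yaw-only boxes)

def rot_z(yaw):
    c, s = np.cos(yaw), np.sin(yaw)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

def to_local(pose, points):
    """World -> box frame, row-vector form of R^T (p - c)."""
    return (points - pose.center) @ rot_z(pose.yaw)

def to_world(pose, local_points):
    """Box frame -> world, row-vector form of R p + c."""
    return local_points @ rot_z(pose.yaw).T + pose.center

def local_density(local_points):
    """Stand-in for a trained object MLP: a Gaussian blob in the box frame."""
    offset = local_points - np.array([0.5, 0.0, 0.0])
    return np.exp(-np.sum(offset ** 2, axis=-1))

def density_at(pose, points):
    """Object density at world-space points, given the current pose."""
    return local_density(to_local(pose, points))

def move_object(pose, translation, delta_yaw):
    """Edit the scene by changing only the object's pose."""
    return replace(pose, center=pose.center + translation, yaw=pose.yaw + delta_yaw)

# Usage: shift the object 3 units along y and turn it by 90 degrees.
pose = ObjectPose(center=np.array([2.0, 0.0, 0.0]), yaw=0.0)
edited = move_object(pose, translation=np.array([0.0, 3.0, 0.0]), delta_yaw=np.pi / 2)

local = np.array([[0.5, 0.0, 0.0], [1.0, 1.0, 0.0]])
before = density_at(pose, to_world(pose, local))
after = density_at(edited, to_world(edited, local))   # same values, new world location
```

The field itself is untouched by the edit; only the transform between world and box coordinates changes, so the object's appearance moves rigidly with its box.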

Citation

For attribution in academic contexts, please cite this work as

@inproceedings{KunduCVPR2022PNF,
  title = {{Panoptic Neural Fields: A Semantic Object-Aware Neural Scene Representation}},
  author = {Kundu, Abhijit and Genova, Kyle and Yin, Xiaoqi and Fathi, Alireza and Pantofaru, Caroline and Guibas, Leonidas and Tagliasacchi, Andrea and Dellaert, Frank and Funkhouser, Thomas},
  booktitle = {CVPR},
  year = {2022},
}